![[D] Is there a model similar to CLIP but for images only dataset, instead of (image, text) pairs? : r/MachineLearning](https://preview.redd.it/uck7e6cwo3j81.png?width=1944&format=png&auto=webp&s=19f1d44fbda5516e579568f9407981429f801f72)

[D] Is there a model similar to CLIP but for images only dataset, instead of (image, text) pairs? : r/MachineLearning

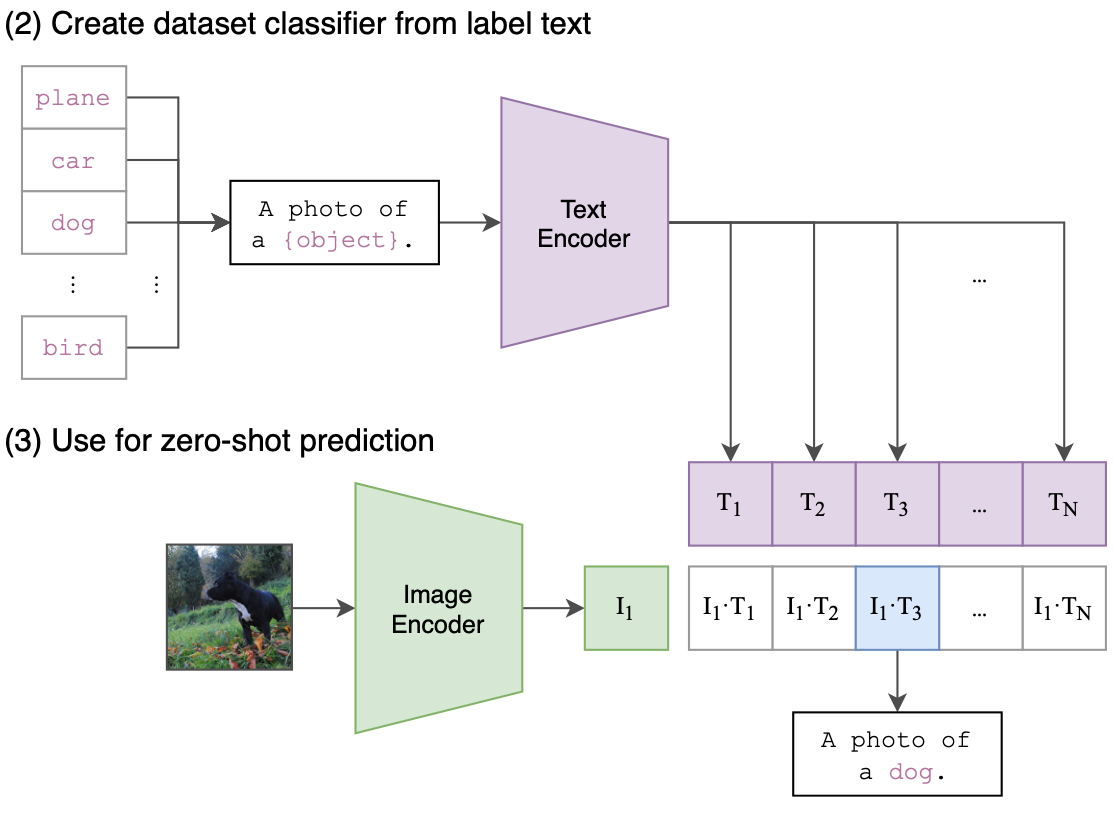

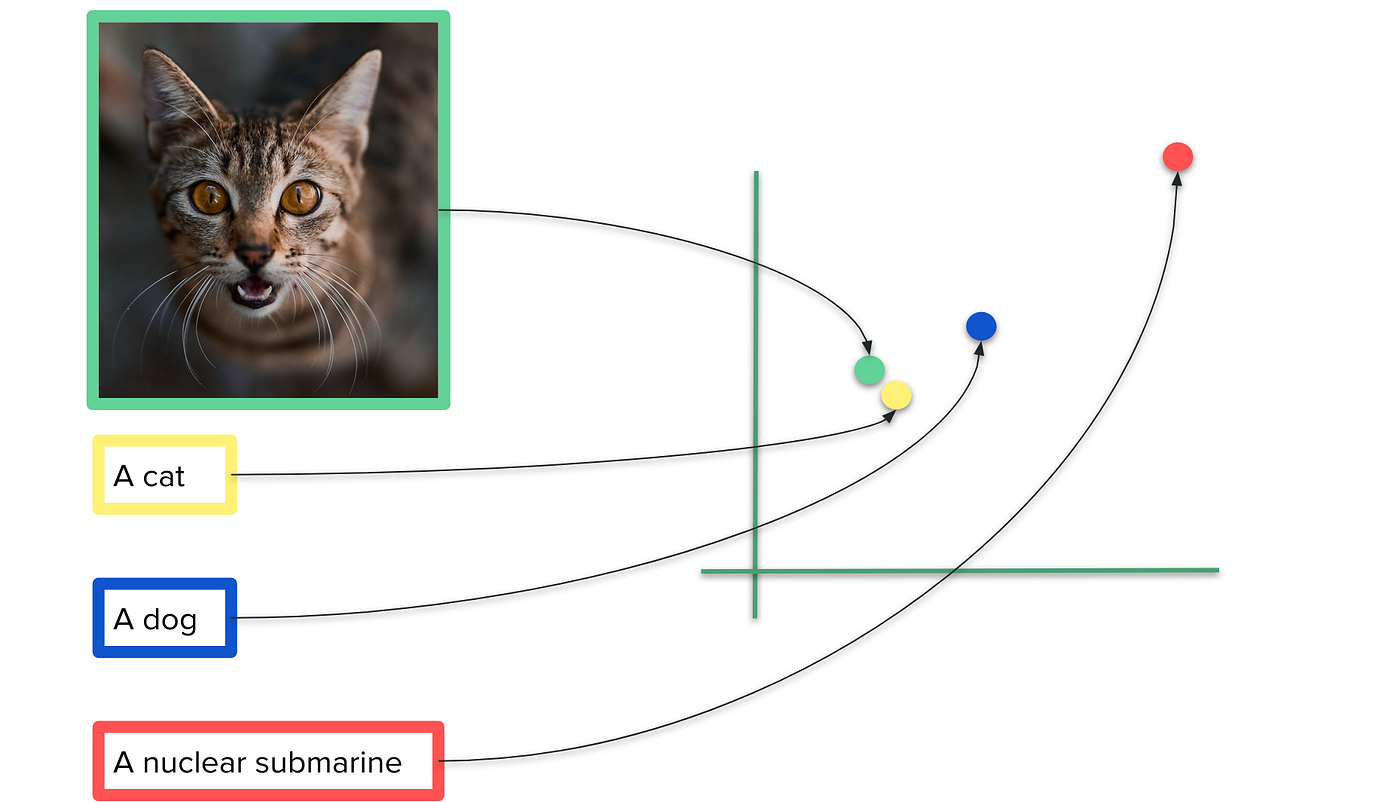

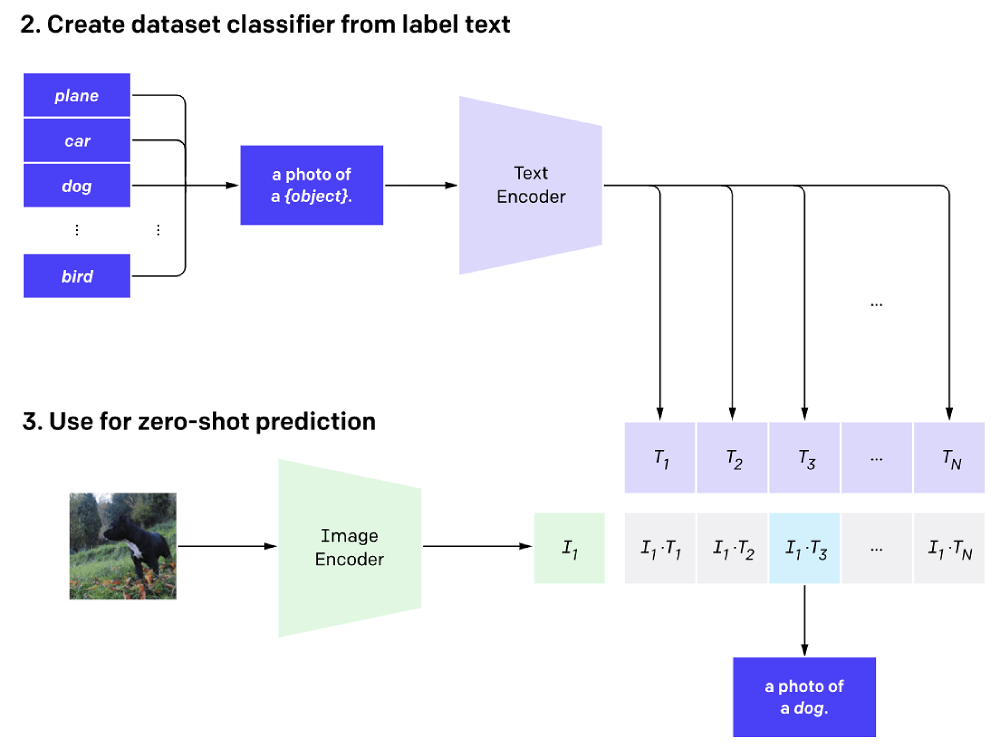

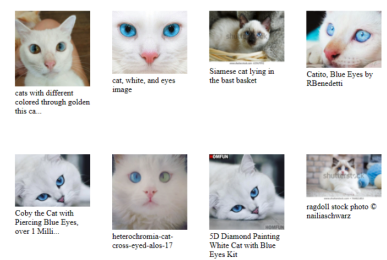

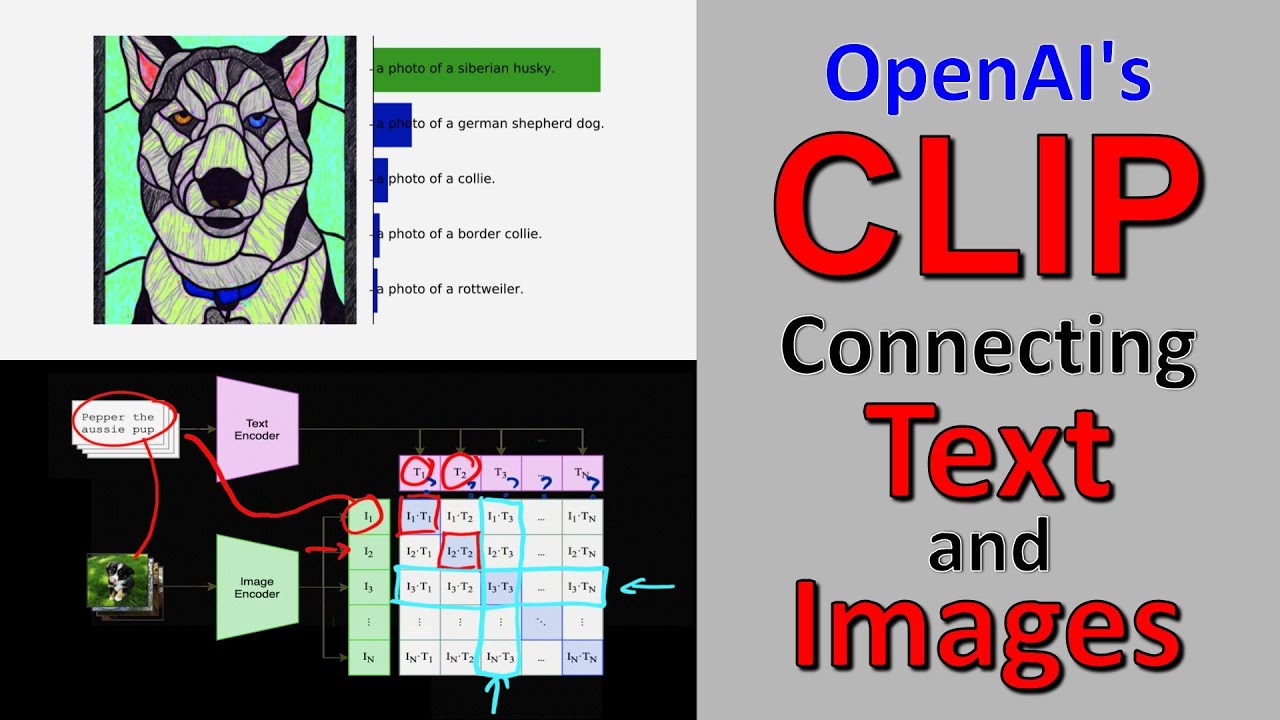

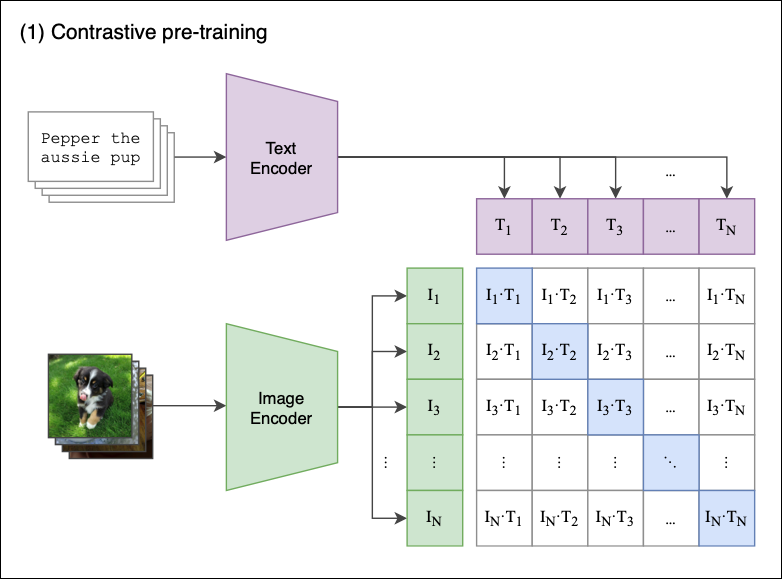

CLIP: The Most Influential AI Model From OpenAI — And How To Use It | by Nikos Kafritsas | Towards Data Science

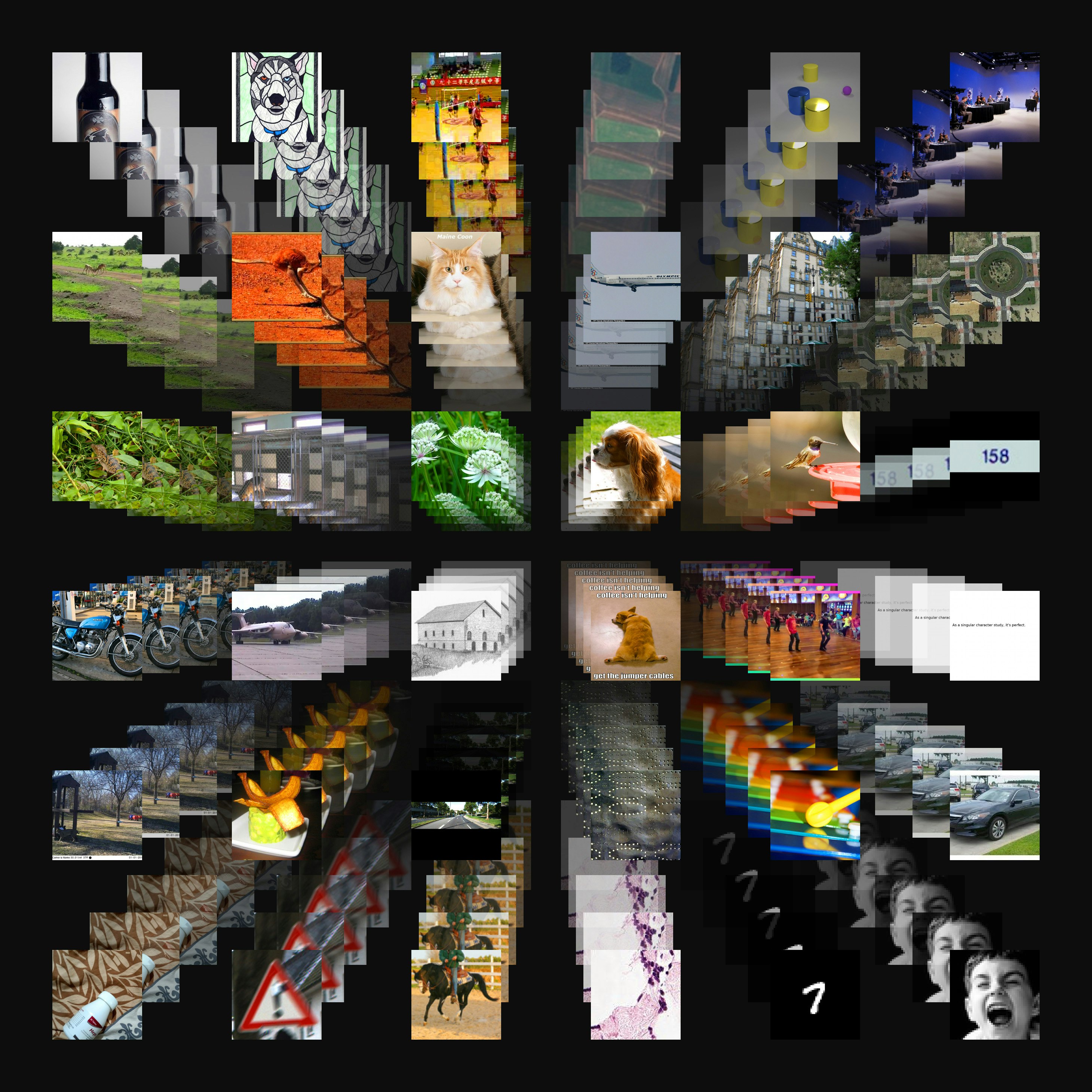

Aran Komatsuzaki on Twitter: "+ our own CLIP ViT-B/32 model trained on LAION-400M that matches the performance of OpenAI's CLIP ViT-B/32 (as a taste of much bigger CLIP models to come). search